Accenture has 30,000 Claude-trained practitioners. Deloitte rolled out Claude to 470,000 employees while Cognizant deployed it to 350,000 more. Infosys signed its own major Anthropic deal last week, covering regulated industries. That is over one million practitioners already committed to Claude delivery, while most of their competitors are still reviewing governance frameworks and unsure where to place their agentic bets.

According to Menlo Ventures, Anthropic has already captured 40% of enterprise AI market share, up from 24% at the start of 2025. The land grab is not coming, folks; it has already happened. If your firm does not have a Claude delivery strategy built around trained practitioners, proprietary accelerators, and outcome-based pricing, you may already be on the scrap heap of labor-based services waiting for the incinerator.

Anthropic’s enterprise market share jumped from 24% to 40% in less than a year. That is not a trend… it is a takeover.

Claude’s ascent is not accidental. Four structural advantages are driving it:

First, Anthropic’s explicit safety and governance positioning unlocks regulated sectors such as financial services, healthcare, and the public sector, where every other AI vendor is stuck in procurement limbo.

Second, Claude is increasingly agentic. It does not generate text and stop. It executes multi-step workflows, reasons across massive context windows, and acts as a participant in enterprise processes, not just a productivity add-on.

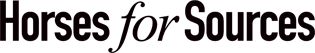

Third, Anthropic has distributed Claude through Amazon Bedrock and Google Cloud, making it available inside existing cloud relationships rather than requiring standalone commercial negotiations. It does not hurt that Amazon is also one of Anthropic’s investors. That combination of trust, capability, and distribution is exactly what the services market needed to move from experimentation to scale.

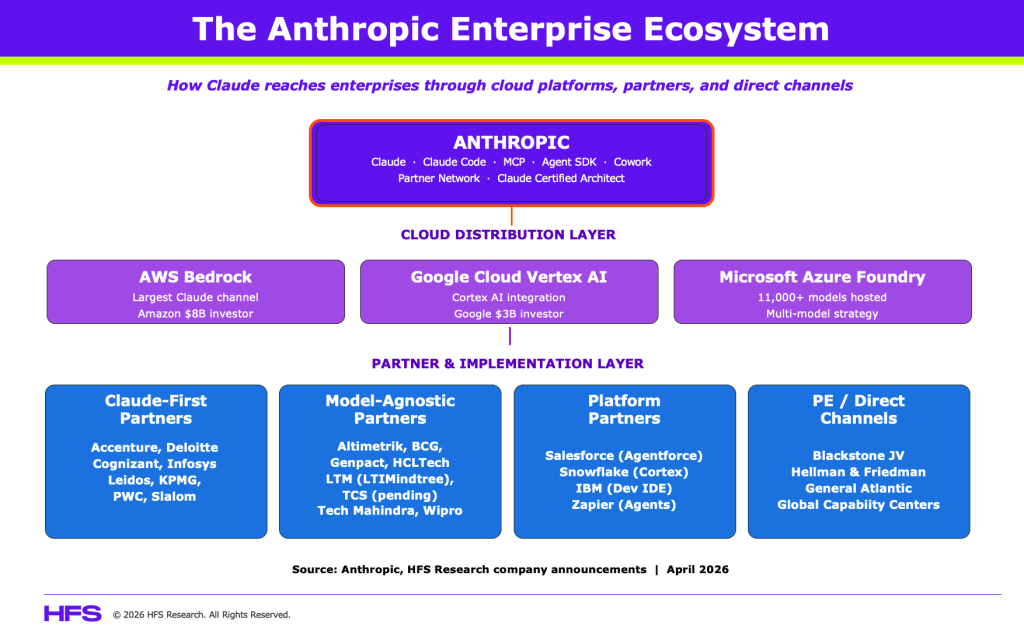

Fourth, Anthropic is becoming the most significant emerging AI platform for closing the enterprise AI Velocity Gap. Our extensive research across the Global 2000 reveals that only 10% of enterprises deploy GenAI or agentic AI organization-wide today, and only a similar share report a cross-departmental rollout. We call this the AI Velocity Gap: individuals racing ahead with AI tools while enterprises remain gridlocked in governance committees, data silos, and change management debt. Claude, embedded into the delivery platforms of the likes of Accenture, Deloitte, Infosys, and Cognizant, is a significant mechanism through which many enterprises will eventually narrow that gap. The service providers that have embedded Claude deepest in their delivery models are positioning themselves to own the transformation budgets that follow.

Two camps are emerging in Anthropic professional services, but not in the clean, binary way many assume.

The first camp has moved decisively, building its own armies of Claude coders.

Accenture formed a dedicated Anthropic Business Group with 30,000 Claude-trained professionals, focused specifically on regulated industries where governance requirements are strictest. Deloitte deployed Claude to its entire global workforce of 470,000 across 150 countries in what became Anthropic’s largest enterprise rollout. Infosys integrated Claude into its Topaz AI platform and built a dedicated Anthropic Center of Excellence targeting telecom, financial services, and manufacturing. Cognizant has deployed Claude across 350,000 employees, aligning Claude models, Claude Code, MCP, and the Agent SDK with its core engineering platforms, and is developing vertical solutions starting with financial services through its Agent Foundry platform to embed agentic workflows into regulated enterprise environments. Slalom announced a formal partnership with Anthropic in November 2024 focused on ethical AI deployment on AWS and has a live case study with United Airlines, where Slalom used Amazon Bedrock and Claude to build AI-powered flight update customization.

Two additional consulting firms belong in this camp. PwC announced a formal collaboration with Anthropic in February 2026, focused on embedding Claude, including Cowork, Claude Code, Opus 4.6, and Sonnet 4.6, into regulated enterprise environments in finance and healthcare. PwC is developing industry-specific plugins, risk frameworks, and workflow redesigns around Claude, positioning it as more than a model-agnostic integrator. KPMG has partnered with Anthropic specifically on Claude for Life Sciences, helping clients integrate Claude into scientific research, clinical workflows, and regulatory processes.

These firms are not just offering clients access to Claude. They are building proprietary delivery infrastructure and repeatable assets around it. That is a fundamentally different competitive position.

The second camp is taking the hyperscaler path, embedding Claude via Amazon Bedrock alongside other foundation models, positioning as integration specialists rather than dedicated Claude practitioners.

Genpact, HCLTech, Wipro, Tech Mahindra, Altimetrik, LTM, and others fit here. The model-agnostic approach preserves flexibility but creates a real commoditization risk. When every provider can access Claude through the same cloud channel, differentiation has to come from proprietary accelerators, domain IP, and managed services layers. Building those assets takes time that is running out fast.

TCS, the largest Indian IT services firm with annual revenue of $30 billion, is notably absent from Camp 1 but moving fast. Its COO confirmed in April 2026 that TCS is working significantly with Anthropic and that a formal partnership announcement is expected soon. The parallel is striking: TCS and Anthropic now operate at roughly the same revenue scale, yet one sells human labor while the other sells the technology replacing it.

IBM represents a third path worth watching: productizing Claude inside enterprise development tools. That creates stickiness that project-based deployments cannot match and positions IBM to capture recurring revenue from AI-embedded workflows rather than one-time transformation fees.

A further competitive dynamic deserves attention: OpenAI’s push to formalize its ecosystem of services partners. Over the past year, it has deepened multi-year collaborations with firms such as McKinsey, BCG, Accenture, and Capgemini to scale enterprise adoption of its models and emerging agent platforms.

This creates a more nuanced competitive landscape. Large providers like Accenture are clearly hedging across both Anthropic and OpenAI, while strategy firms such as McKinsey, BCG, and Bain have built strong alignment with OpenAI’s enterprise roadmap. However, none of these partnerships are exclusive, and most services firms are deliberately maintaining multi-model strategies.

The reality is not a clean split between “Claude camps” and “OpenAI camps.” Systems integrators are increasingly supporting multiple model ecosystems, often shaped by hyperscaler relationships such as AWS, Microsoft, and Google.

Many Global Capability Centers (GCCs) are building direct Anthropic capability from inside the enterprise.

Many GCCs, particularly the 1,700-plus operating in India, are no longer back-office execution units. The most advanced GCCs are functioning as internal AI innovation labs, piloting Claude directly through Bedrock or enterprise agreements, and building proprietary workflow automation that bypasses the need for third-party services firms entirely (see earlier article). When a GCC at a major US financial institution can deploy Claude Code across its engineering team, build MCP integrations to its internal data stack, and redesign its own processes without engaging an Accenture or an Infosys, the addressable market for traditional services engagement shrinks from below, not just from above. Services firms must position themselves as the architects of GCC AI strategy, not just the vendors GCCs replace. That requires a fundamentally different client conversation than the one most account teams are having today.

A related disintermediation threat is emerging from private equity. Anthropic is in discussions with Blackstone, Hellman & Friedman, and General Atlantic to create a joint venture targeting up to $1 billion in funding, with Anthropic contributing $200 million. The venture would deploy Claude across PE-backed portfolio companies in a Palantir-style model combining software licensing with implementation consulting. If completed, this creates a distribution channel that bypasses traditional services firms entirely for a large segment of the enterprise market.

Microsoft is playing a different game entirely: hedging across every frontier model so it can ride whichever one leaps forward next.

Microsoft deserves its own analysis here because its strategy does not fit neatly into any of the camps outlined above. While services firms are choosing either deep Claude commitment or model-agnostic flexibility, Microsoft is building the platform layer underneath all of them. Its Azure AI Foundry now hosts over 11,000 models, including Claude through a direct Anthropic partnership, alongside OpenAI’s GPT family, Meta’s Llama, Mistral, Cohere, and Microsoft’s own emerging MAI models. Copilot itself is shifting from an OpenAI-only product to a multi-model architecture that can compare and cross-check responses across models. Microsoft is not betting on one model winning. It is betting that no single model will win permanently, and that the real margin lives in the orchestration, governance, and distribution layer that sits between frontier models and enterprise workflows.

This is a structurally sound hedge, and services leaders must understand Microsoft’s strategy to get the most from their relationship (and from the services they will bring to market with Microsoft). When Microsoft makes Claude available through Azure AI Foundry alongside its own MAI models, it commoditizes the model layer for enterprise buyers. As a result, the cost of switching between frontier models drops for everyone. Instead of models driving the value, the value migrates upward to whoever controls the integration fabric, the security and compliance overlay, and the agentic workflow design. In a game of 4D chess, Microsoft is positioning itself to be that control layer across the entire enterprise stack, from Azure infrastructure to Microsoft 365 to GitHub to Dynamics. If it can execute, the services firms that built deep single-model practices will face a platform owner that can swap models underneath its customers without anyone noticing. That is the quiet threat inside Microsoft’s multi-model strategy: it turns model-specific expertise into a depreciating asset. (If you are a service provider leader, read that last sentence again!)

But it’s not all rainbows and unicorns. The counter-argument is execution risk for Microsoft. Its Copilot adoption has underwhelmed, with only 15 million subscriptions against 450 million commercial seats, and Microsoft’s stock has pulled back sharply on concerns that its $100-billion-plus annual AI capex is not yet translating into proportional enterprise returns. Building its own MAI models while maintaining a $13 billion OpenAI partnership and also onboarding Anthropic and Mistral creates strategic complexity that no amount of Azure infrastructure can paper over. Services firms with deep Claude or OpenAI practices may find that their focused expertise is exactly what enterprise buyers want when the platform layer feels too broad and too uncertain to bet on alone.

The question for services leaders is not whether Microsoft’s hedge is smart (or necessarily a threat). It is whether your firm’s differentiation is deep enough to survive inside a platform that is designed to make your model-specific expertise interchangeable.

Claude is not in the lab anymore: it is already compressing costs and cycle times across financial services, healthcare, pharma, and software engineering.

The most important dynamic for services leaders is not the partnership announcements. It is what Claude is already doing inside enterprise processes. In financial services, Bridgewater’s investment research team is using Claude to draft Python scripts, run scenario analysis, and visualize financial projections. The system is designed to replicate junior analyst workflows, and it reduces time-to-insight by 50 to 70% on complex equity, FX, and fixed-income reports.

In cybersecurity, HackerOne has reduced vulnerability response time by 44% using Claude. In pharmaceutical development, Novo Nordisk, the maker of Ozempic, was averaging just 2.3 clinical study reports per writer annually, with each report running up to 300 pages, and has used Claude to transform that bottleneck. In telecom, TELUS deployed Claude to 57,000 employees, giving them direct access to AI-powered workflows across developer, analyst, and support functions. In software engineering, Claude Code now holds over half of the AI coding market, enabling junior developers to produce senior-level code and onboard in weeks instead of months.

Several additional deployments reinforce the pattern. Brex automated 75% of expense transactions using Claude on Amazon Bedrock, achieving 94% policy compliance and saving 169,000 hours monthly, equivalent to $56.5 million in salary. Snowflake integrated Claude into its Cortex AI platform, achieving over 90% accuracy on text-to-SQL queries across more than 10,000 customer organizations. Zapier deployed over 800 internal Claude-driven agents, achieving 89% employee adoption and 10x year-over-year growth in Claude-powered tasks. TELUS, beyond its 57,000-employee deployment, has built over 13,000 AI-powered tools internally, saved more than 500,000 staff hours, and realized over $90 million in measurable business benefits. Salesforce made Claude the foundational model for Agentforce 360, its autonomous AI agent platform that crossed $500 million in annual recurring revenue with 330% year-over-year growth. Cox Automotive integrated Claude via Bedrock to generate personalized communications, doubling lead follow-ups and test drive appointments.

Claude Code is not a productivity tool. It is a direct substitution mechanism for the junior-to-mid engineering workforce that anchors the Indian IT services delivery pyramid.

Unlike standard AI coding assistants that suggest completions line by line, Claude Code operates agentically. It reads codebases, plans multi-file changes, executes terminal commands, runs tests, and iterates on failures without human intervention at each step. A junior developer using Claude Code is not a faster junior developer. They are operating with the output velocity of a mid-level engineer. A mid-level engineer using Claude Code closes the gap on senior-level architecture decisions. The economics of the delivery pyramid do not bend gradually under this pressure. They break.
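The plan, execute, test, and iterate cycle described above can be sketched in a few lines of Python. This is a toy illustration of the general pattern only, not how Claude Code is implemented: `run_agent_loop`, `propose_change`, `run_tests`, and the patch names are all hypothetical stand-ins, and the "tests" are a stub that accepts only the second patch.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class AgentRun:
    """Record of one agentic session (hypothetical structure)."""
    attempts: int = 0
    passed: bool = False
    log: List[str] = field(default_factory=list)


def run_agent_loop(
    propose_change: Callable[[str], str],
    run_tests: Callable[[str], Tuple[bool, str]],
    max_attempts: int = 3,
) -> AgentRun:
    """Propose a change, test it, and feed failures back until tests pass."""
    run = AgentRun()
    feedback = ""  # empty on the first attempt; test output afterwards
    while run.attempts < max_attempts and not run.passed:
        run.attempts += 1
        change = propose_change(feedback)
        run.log.append(f"attempt {run.attempts}: applied {change}")
        run.passed, feedback = run_tests(change)
    return run


# Stub "model": proposes a first patch, then a revised one after a failure.
def propose_change(feedback: str) -> str:
    return "patch-v2" if feedback else "patch-v1"


# Stub "test suite": only the revised patch passes.
def run_tests(change: str) -> Tuple[bool, str]:
    if change == "patch-v2":
        return True, ""
    return False, "test_invoice_total failed"


result = run_agent_loop(propose_change, run_tests)
print(result.passed, result.attempts)
```

The point of the sketch is that no human approves each step inside the loop. Human effort moves to setting `max_attempts`, defining what the tests check, and reviewing the final log.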

Cognizant has already moved Claude Code, MCP, and the Agent SDK to the center of its engineering practice. That is the right instinct, and it points to what a transformed software delivery practice actually looks like: fewer bodies doing rote implementation, more architects governing agent workflows, more domain specialists translating business requirements into agentic task structures, and more QA and oversight roles ensuring that autonomously generated code meets compliance and security standards. The headcount does not disappear. It reshapes. The firms that lead this transition will capture premium margins. The firms that resist it will lose application development mandates to competitors who can deliver faster, cheaper, and at better quality with smaller teams.

These are not proofs of concept. They are production deployments in some of the world’s most demanding and regulated environments. The compression of skilled labor hours is already measurable, and it is accelerating. The services firms that understand this are repositioning the human role toward oversight, orchestration, and domain judgment. The ones still debating whether to pilot will face a client base that has already moved.

The real battleground is not which firm has a Claude deal. It is who can govern, integrate, and redesign work around agentic AI at enterprise scale.

Three disciplines will determine which service providers win the next wave of AI transformation revenue.

First, workflow integration: connecting Claude securely to enterprise systems across SAP, Salesforce, ServiceNow, and other data repositories that currently sit in silos. Most enterprises do not yet have the foundation for this, which means the integration layer provides significant service value.

Second, AI governance and oversight: building monitoring, approval flows, and audit trails into AI-enabled operations. HFS data confirms that AI growth hinges on how effectively organizations strengthen security, governance, and data control.

Third, workforce redesign: determining the optimal human-to-agent ratio across business functions and restructuring roles, incentives, and metrics accordingly. In practice, this means replacing entry-level execution roles with four new archetypes: AI Workflow Architects who design the agentic task structures that replace manual processes; AI Output Validators who govern quality, compliance, and accuracy of autonomous outputs; Domain Translation Specialists who convert business requirements into agent-ready instructions; and AI Operations Managers who monitor multi-agent systems and escalate exceptions. These are not theoretical roles. They are the functions that determine whether AI deployment creates durable enterprise value or just generates liability at scale.
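To make the governance discipline concrete, here is a minimal Python sketch of an approval flow with an audit trail. It is an assumption-laden illustration, not a reference design: the `ApprovalGate` class, the risk labels, and the rule that only "low" risk actions are auto-approved are all hypothetical choices made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AuditEvent:
    """One immutable-by-convention record of a governance decision."""
    action: str
    risk: str
    decision: str
    decided_by: str


@dataclass
class ApprovalGate:
    # Hypothetical policy: only these risk tiers skip human review.
    auto_approve_risks: frozenset = frozenset({"low"})
    audit_trail: List[AuditEvent] = field(default_factory=list)

    def review(self, action: str, risk: str,
               human_approver: Optional[str] = None) -> bool:
        """Return True if the agent may proceed; always log the decision."""
        if risk in self.auto_approve_risks:
            decision, who = "approved", "policy"
        elif human_approver is not None:
            decision, who = "approved", human_approver
        else:
            decision, who = "escalated", "pending-human"
        self.audit_trail.append(AuditEvent(action, risk, decision, who))
        return decision == "approved"


gate = ApprovalGate()
gate.review("update vendor record", "low")                      # policy approves
gate.review("issue customer refund", "high")                    # escalated
gate.review("issue customer refund", "high", "ops-lead")        # human approves
for event in gate.audit_trail:
    print(event)
```

Every call is logged whether or not the action proceeds, which is what makes the trail usable for audit. Deciding which risk tiers belong in `auto_approve_risks` is the kind of judgment the oversight roles described above would own.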

Anthropic’s Model Context Protocol is significant here. Standardized connectivity lowers the integration burden for models but raises the design burden. Someone still has to architect how those connections work inside complex, legacy-laden organizations. That architecture work is high-value, recurring, and defensible. It will not be commoditized as quickly as code generation or document drafting. Cognizant has made MCP a core part of its Claude deployment, using it to give AI agents standardized access to developer tools and enterprise data rather than treating each integration as a bespoke project. The providers that follow that approach will build more durable margin than those still quoting FTEs.

The revenue model shift is also accelerating. When AI reduces the hours required to deliver a given output, clients will not accept time-and-materials pricing. HFS Research data shows agentic AI investment is set to surge 38% in 2026 alone, and enterprises are demanding outcome-based models, AI operations management services, and verticalized solution packages. The providers that have retooled their pricing and delivery models around these structures will capture that spend. The providers still quoting FTEs will lose it. This is the Services-as-Software inflection point. The firms that survive the transition are those that stop selling access to practitioners and start selling guaranteed business outcomes delivered by a combination of human expertise, proprietary IP, and AI agents working in concert. That is a fundamentally different commercial model, a different margin structure, and a different conversation with the CFO. The firms that master it will not just retain their existing clients. They will take share from competitors who are still explaining why their FTE count is a feature rather than a liability.

Bottom line: The Claude services land-grab is firmly underway for the early leaders.

Accenture, Deloitte, Infosys, and Cognizant have collectively committed over one million practitioners to Claude delivery and are building proprietary accelerators, governance frameworks, and vertical solution factories around it. Claude is already cutting research cycle times by 50 to 70% at Bridgewater, slashing vulnerability response by 44% at HackerOne, and transforming clinical documentation throughput at Novo Nordisk. This is not future potential. It is current competitive reality.

The financial trajectory reinforces the urgency. Anthropic’s run-rate revenue surged from $9 billion at the end of 2025 to over $30 billion by April 2026. Enterprise clients spending over $1 million annually doubled from 500 to 1,000 in under two months. Eight of the Fortune 10 are Claude customers. Claude Code alone reached $2.5 billion in annual recurring revenue in nine months. The Claude Partner Network, backed by $100 million in Anthropic investment, now includes a Claude Certified Architect certification and a fivefold increase in partner-facing technical staff. The window for establishing a competitive Claude practice is not narrowing. It has nearly closed.

Service providers that have not matched that commitment are not just behind on a feature. They are ceding the transformation budgets that will define industry positioning for the next decade. Stop forming committees to evaluate AI strategy and start building the delivery capability to execute one. Every quarter you delay is a quarter your clients spend getting comfortable with your competitor’s Claude practitioners instead of yours.
