Enterprise technology leaders are drowning in AI commentary. LLMs. Agents. Vibe coding. The analyst decks keep coming. But the hard question nobody is answering is this: who actually wires AI into your live systems, governs it in production, and keeps it working when the AI software vendors leave the room? The answer is Forward Deployed Engineering (FDE). If your transformation strategy does not include it, you are building AI theater, not an AI operating model.

93% of enterprises are stuck in AI pilot purgatory. The missing layer is not better models or bigger budgets. It is Forward Deployed Engineering, and the firms that crack it at scale will own the recurring revenue layer of enterprise AI.

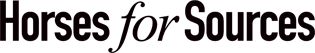

The Services-as-Software Flywheel brings together the AI technologies to steer firms into the AI era

The HFS Services-as-Software Flywheel has four accelerants: LLMs that accelerate reasoning and code generation, agentic AI that orchestrates decisions across systems, vibe coding that turns business intent into working service agents, and Forward Deployed Engineers (FDEs) who activate AI inside real enterprise environments. The result is a compounding system where intent becomes production workflows, workflows generate data, and that data improves the next generation of agents.

The missing insight in many AI strategies is that velocity alone does not create enterprise value. The Services-as-Software flywheel requires an embedded execution layer that connects these technologies inside real operational systems. FDE forms that layer, ensuring the flywheel spins inside production environments rather than inside sandbox pilots. Here is what actually happens without FDE:

- LLMs summarize PDFs in sandboxed demos, disconnected from governed enterprise data.

- Agents sit in pilot mode indefinitely because nobody has designed the approval chains, audit trails, and escalation paths that regulated operations require.

- Vibe coding generates experimental agents at the business unit level with no architectural coherence, creating fragmentation and compliance exposure.

The Flywheel does not spin because there is no embedded engineering force to connect the components inside real systems. That is the dirty secret of AI services. The gap is not technological. It is operational.

Services-as-Software does not eliminate services. It embeds them deeper into the software. FDE is the mechanism that makes that shift real.

Palantir cracked this a decade ago. The ecosystem forming around it is a preview of the emerging Services-as-Software market.

Palantir built its competitive advantage not on model superiority but on proximity to operational reality. Its forward deployed engineers embedded themselves inside client environments, wiring models into live data, real permissions, regulatory controls, and the messy ontologies that reflect how enterprises actually function. They did not sell transformation roadmaps. They shipped production workflows.

The market is increasingly recognizing this model. Palantir’s share price has increased roughly 10× in the past two years, reflecting investor belief that the future of enterprise AI lies not just in models, but in the ability to embed those models into operational systems.

That approach is now being industrialized through AIP Bootcamps: structured engagements that take a team from a scoped problem to a working production deployment in 1 to 5 days. Not a proof of concept in a sandbox. A live workflow with real data and real controls. That changes the entire commercial dynamic.

FDE is not implementation. It is the engineering layer that makes AI governable.

There is a persistent misunderstanding in the market. FDE is often conflated with systems integration or technical implementation. It is neither. FDE is the discipline that turns AI capabilities into durable enterprise mechanisms. The Palantir model makes this concrete: FDE teams build ontologies that reflect how the enterprise actually operates, wire models into real data with real permissions, and design the governance architecture that keeps autonomous systems accountable.

What LLMs cannot do on their own:

- Connect themselves to governed enterprise data with appropriate permission structures.

- Navigate the regulatory architecture of specific industries, from HIPAA to Basel III to GDPR.

- Design and enforce human approval chains for decisions that carry legal or financial consequences.

- Monitor for model drift, output degradation, or ontological inconsistency over time.

- Maintain alignment between the AI layer and the evolving business logic it is meant to serve.

FDE teams own all of that. The cost of not having them is not a missed optimization. It is a compliance event, a reputational failure, or an AI system that quietly degrades until someone notices the outputs stopped making sense.
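To make "monitor for model drift" less abstract, here is a minimal sketch of one common technique, the Population Stability Index (PSI), applied to the mix of decisions an agent emits (for example approve, refer, decline). The function names, the sample data, and the 0.25 alert threshold are illustrative assumptions; 0.25 is a rule of thumb often cited in credit-risk practice, not a standard. Real FDE monitoring would also track inputs, output quality, and business KPIs, not just one distribution.

```python
import math
from collections import Counter


def psi(baseline: list, current: list, eps: float = 1e-6) -> float:
    """Population Stability Index between two samples of categorical outputs."""
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    total = 0.0
    for c in categories:
        # Share of each sample in this category; eps avoids log(0) for empty bins.
        b = max(base_counts[c] / len(baseline), eps)
        a = max(cur_counts[c] / len(current), eps)
        total += (a - b) * math.log(a / b)
    return total


def drift_alert(baseline: list, current: list, threshold: float = 0.25) -> bool:
    """Flag the agent for human review when its output mix shifts past the threshold.

    The default threshold is an assumed rule of thumb, not a regulatory value.
    """
    return psi(baseline, current) > threshold


# Hypothetical decision logs: the baseline month versus two later windows.
baseline = ["approve"] * 70 + ["refer"] * 25 + ["decline"] * 5
current_same = ["approve"] * 68 + ["refer"] * 27 + ["decline"] * 5
current_shift = ["approve"] * 40 + ["refer"] * 20 + ["decline"] * 40

print(drift_alert(baseline, current_same))   # False: PSI is about 0.002
print(drift_alert(baseline, current_shift))  # True: PSI is about 0.91
```

The point of wiring a check like this into production is that someone gets alerted when the output mix moves, rather than discovering months later that the outputs stopped making sense.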

LLMs accelerate. FDE operationalizes. Without the second, the first is a liability, not an asset.

Agentic AI without FDE governance is not transformation. It is risk accumulation.

Agentic AI is the most significant shift in enterprise technology in a generation. Agents can trigger workflows, coordinate decisions across systems, execute multi-step logic, and enforce compliance rules in real time. But autonomous workflow proliferation without governance architecture is dangerous in regulated industries.

A financial services firm cannot allow agents to make credit decisions without explicit decision rights, immutable audit trails, escalation paths, and human override mechanisms. A healthcare system cannot let clinical workflow agents operate without continuous performance monitoring and documented accountability chains. This is not a chatbot problem. It is a systems engineering problem, and FDE is the only delivery model currently designed to solve it at enterprise scale. In practice, FDE governance for agentic AI spans:

- Ontology design that reflects how the enterprise actually operates, not how a vendor template assumes it does.

- Decision rights mapping documenting who and what can authorize each class of agent action.

- Continuous performance monitoring that catches drift before it becomes a compliance failure.

- Human-in-the-loop override architectures designed for operational teams, not technical administrators.

- Escalation path engineering that routes exceptions to the right humans at the right level of urgency.
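As an illustration of decision rights mapping, escalation, and audit trails working together, here is a minimal sketch. Everything in it is hypothetical: the `Rule` table, the `route_action` function, the action class `credit_limit_increase`, the 5,000 auto-approval limit, and the `credit_officer` role are invented for the example and do not represent Palantir AIP or any vendor API. Unmapped actions are denied by default, and each log entry hashes the previous one; note that hash chaining makes tampering detectable, not impossible, so a real "immutable" trail would also need write-once storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"  # agent may act without a human
    HUMAN_REVIEW = "human_review"  # a named role must approve first
    BLOCKED = "blocked"            # agent may never take this action


@dataclass(frozen=True)
class Rule:
    """One row of a hypothetical decision-rights map."""
    max_auto_amount: float  # agent may act alone at or below this amount
    approver_role: str      # role that must approve anything above it


@dataclass
class AuditLog:
    """Append-only log; each entry hashes the previous one so edits are detectable."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(record: dict, prev_hash: str) -> str:
        payload = json.dumps(record, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "genesis"
        self.entries.append({**record, "prev": prev_hash,
                             "hash": self._digest(record, prev_hash)})

    def verify(self) -> bool:
        prev_hash = "genesis"
        for entry in self.entries:
            record = {k: v for k, v in entry.items() if k not in ("prev", "hash")}
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(record, prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


def route_action(rules: dict, log: AuditLog, agent_id: str,
                 action_class: str, amount: float) -> tuple:
    """Decide whether an agent action runs, escalates, or is blocked, and log it."""
    rule = rules.get(action_class)
    if rule is None:
        route, owner = Route.BLOCKED, "none"  # unmapped actions are denied by default
    elif amount <= rule.max_auto_amount:
        route, owner = Route.AUTO_APPROVE, agent_id
    else:
        route, owner = Route.HUMAN_REVIEW, rule.approver_role  # escalation path
    log.append({"agent": agent_id, "action": action_class,
                "amount": amount, "route": route.value, "owner": owner})
    return route, owner


rules = {"credit_limit_increase": Rule(max_auto_amount=5_000, approver_role="credit_officer")}
log = AuditLog()
print(route_action(rules, log, "agent-7", "credit_limit_increase", 2_000))   # auto-approved
print(route_action(rules, log, "agent-7", "credit_limit_increase", 25_000))  # escalated to credit_officer
print(route_action(rules, log, "agent-7", "close_account", 0))               # blocked: no decision right
print(log.verify())  # True until someone edits an entry
```

The design choice worth noting is deny-by-default: an agent gains a decision right only when someone has explicitly mapped it, which is the opposite of how most pilots are wired.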

Vibe coding creates velocity. FDE prevents it from becoming chaos.

Vibe coding lowers the barrier to building service agents to near zero. Business analysts can express intent and receive working agent code in return. That is a structural change in enterprise operating capacity. It is also a fragmentation risk without an engineering discipline layer.

When every business unit spins up agents independently, you get redundant logic across siloed codebases, compliance exposure from agents built outside the governance perimeter, and an AI estate that is technically diverse but operationally unmanageable. The firms in the Palantir ecosystem, building reusable ontology libraries and control frameworks for specific verticals, are creating precisely the discipline layer that makes vibe coding sustainable. That is not a feature. It is a defensible competitive position with real switching costs attached. That discipline layer rests on:

- Standard patterns that teams build within, not around.

- Reusable ontologies that maintain consistency across business unit deployments.

- Version control and change management frameworks designed for agent-based systems.

- Guardrails that catch compliance and security issues before deployment, not after.
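One way to picture a guardrail that catches issues before deployment is a policy check that every vibe-coded agent must pass before it goes live. The sketch below is hypothetical throughout: the `AgentManifest` fields, the `claims-ontology` name and versions, and the data-scope strings are invented for illustration and are not drawn from Foundry, AIP, or any other product. It checks for an accountable owner, an approved shared ontology version, and data access inside the governance perimeter.

```python
from dataclasses import dataclass, field

# Hypothetical policy values, for illustration only.
APPROVED_ONTOLOGIES = {"claims-ontology": {"2.1", "2.2"}}
ALLOWED_DATA_SCOPES = {"claims.read", "claims.write", "policy.read"}
SCOPES_NEEDING_PRIVACY_SIGNOFF = {"member.pii.read"}


@dataclass
class AgentManifest:
    """Hypothetical description a business unit submits before deploying an agent."""
    name: str
    owner: str
    ontology: str
    ontology_version: str
    data_scopes: set = field(default_factory=set)
    privacy_signoff: bool = False


def check_manifest(m: AgentManifest) -> list:
    """Return guardrail violations; an empty list means the agent may deploy."""
    problems = []
    if not m.owner:
        problems.append("no accountable owner")
    # Agents must build within a shared, approved ontology version, not around it.
    if m.ontology_version not in APPROVED_ONTOLOGIES.get(m.ontology, set()):
        problems.append(f"unapproved ontology {m.ontology}@{m.ontology_version}")
    sensitive = m.data_scopes & SCOPES_NEEDING_PRIVACY_SIGNOFF
    if sensitive and not m.privacy_signoff:
        problems.append(f"privacy sign-off missing for {sorted(sensitive)}")
    unknown = m.data_scopes - ALLOWED_DATA_SCOPES - SCOPES_NEEDING_PRIVACY_SIGNOFF
    if unknown:
        problems.append(f"scopes outside governance perimeter: {sorted(unknown)}")
    return problems


good = AgentManifest("triage-bot", "claims-ops", "claims-ontology", "2.2", {"claims.read"})
shadow = AgentManifest("shadow-bot", "", "claims-ontology", "1.0",
                       {"claims.read", "member.pii.read", "hr.read"})
print(check_manifest(good))    # []
print(check_manifest(shadow))  # four violations, one per failed check
```

Running a check like this in the deployment pipeline, rather than in a quarterly review, is what moves compliance from "after" to "before".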

The Palantir AIP (Artificial Intelligence Platform) Bootcamp is the most important commercial innovation in enterprise AI services right now.

In a Services-as-Software market, the client is not buying a transformation roadmap. They are buying working outcomes: claims triage that runs autonomously, supply chains that self-correct in real time, and compliance systems that audit continuously.

The AIP Bootcamp proves this model is real: a structured engagement, one to five days, that lands a specific workflow in production with real data and real controls. Instead of selling a roadmap, you sell a working workflow, and the client sees production capability before committing to scale. That changes the entire conversation about what AI services should cost and how they should be structured.

The downstream commercial implications are structural:

- Sales cycles compress because proof-in-production replaces proof-of-concept theater.

- Pricing shifts from time-and-materials to outcome-based or platform-plus-run structures.

- Margin structures change because expertise density replaces labor volume as the core economic driver.

- Recurring revenue replaces project revenue because deployed workflows require continuous operation, monitoring, and evolution.

FDE-capable service providers are no longer selling hours. They are selling production systems that keep delivering outcomes. That distinction separates the AI platform builders from the AI plumbers.

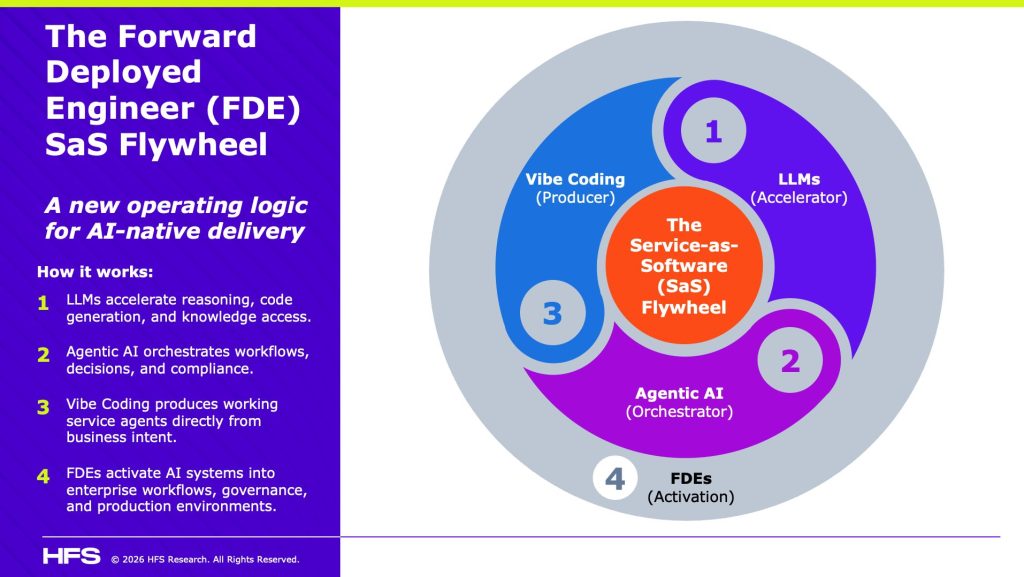

The partner lineup is significant not just for who is in it, but for how it is splitting: strategy-to-execution consultancies on one side, industrial-scale integrators and operators on the other. That split is not accidental. It is the three-layer market structure forming in real time.

The three-layer market is forming now, and market position is not guaranteed.

The Palantir partner ecosystem is the clearest early map of the market structure that will define enterprise AI services through the next five years. Three durable layers are forming, and the window to establish defensible position is narrowing.

Layer A: Strategy and operating model redesign.

Bain, Deloitte, PwC, and KPMG will own the AI operating system transformation layer. They define how enterprises restructure around AI-enabled workflows, with Palantir and other platforms as execution substrates. Competitive differentiation is proximity to senior leadership and the organizational change capability built over decades.

Layer B: Build and integrate.

Accenture, Capgemini, Infosys, and Cognizant will compete on certified delivery capacity, vertical industry accelerators, and speed-to-production. The winners will build the largest libraries of reusable ontologies, workflow templates, and controls frameworks for specific verticals. Switching costs accumulate here, and margin density improves over time. Accenture’s positioning as a preferred global partner signals a land-and-scale economic model that is already pulling ahead of the field.

Layer C: Run and govern.

This is where Services-as-Software becomes genuinely recurring. Rackspace has made the most explicit move here, positioning governed managed operations as a production service with operational SLAs. As more workflows go live, demand for disciplined AI estate management becomes a standalone commercial category with high switching costs and defensible margin.

One critical dynamic cutting across all three layers: government and regulated industries will disproportionately drive spend. Palantir’s center of gravity remains in defense, intelligence, and regulated enterprise, and it is expanding. Partners with existing clearances, regulatory delivery experience, and government relationships have a structural advantage that pure commercial integrators will struggle to replicate quickly.

The ontology arms race has already started, and the winners will be obvious within 18 months.

Foundry’s ontology concept, modeling the enterprise as an interconnected operational system, is the stickiest element in the platform. Partners building deep, reusable ontologies for specific verticals are not just accelerating delivery. They are creating lock-in that travels with the client relationship and compounds with every additional use case deployed.

- Deloitte is combining its own assets with Foundry and AIP to create solution factory economics with accelerated time-to-value.

- Accenture is building certified talent at scale to establish the largest industrialized delivery capacity in the market.

- Cognizant is targeting healthcare operations specifically through the TriZetto combination, creating vertical depth rather than horizontal breadth.

- Rackspace is building the managed operations layer that everyone else will eventually need to hand off to a specialist.

The firms still assembling their Palantir partnership and staffing for generic Foundry delivery are already behind. Ontology depth, workflow libraries, and delivery track record cannot be purchased quickly. The advantage is compounding in favor of early movers.

As AI-assisted building accelerates, services differentiation moves further up-stack into domain architecture, accountability frameworks, and measurable outcome guarantees. Providers competing on implementation capacity will find the floor dropping under them.

The brutal arithmetic: expertise density wins, labor leverage loses.

Enterprise technology leaders evaluating their services relationships need to ask a direct question: is this firm’s growth model built on expertise density or labor leverage? The answer determines everything about value delivery in an AI-driven market.

Traditional IT services scaled revenue by scaling headcount. LLM acceleration and agentic automation are compressing the labor input required per outcome delivered. A provider whose economics depend on headcount growth faces a structural margin problem regardless of what their AI partnership announcements say.

FDE-style delivery inverts the model: smaller squads, higher context density, faster deployment, higher-value outcomes, and recurring run revenue from systems they operate. The Palantir partner firms moving fastest on this are growing their expertise density and workflow libraries, not their headcount. That is the Services-as-Software endgame.

You are not choosing between AI vendors. You are choosing between providers who can deploy AI into production and those who will keep you in the pilot phase indefinitely.

The Bottom Line: Stop treating FDE as optional. It is critical to activating your AI systems and capabilities.

Every quarter your enterprise spends in pilot mode is a quarter your competitors spend building production AI advantages. Demand FDE-capable delivery from your services partners, and measure them on production deployments, not roadmap slides.

If a partner cannot show a working workflow in your live systems within 90 days, they are not your AI transformation partner. They are your most expensive source of false confidence. The Palantir partner ecosystem has already shown what production-first delivery looks like. There is no excuse left for settling for anything less.
