Every enterprise today is using some form of AI, but only one in five has embraced agentic AI to actually make decisions. This is not a technology problem, but a trust problem.

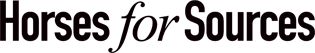

Recent research covering 545 enterprise decision makers across the Global 2000 reveals that 78% give agentic AI very little or no autonomy:

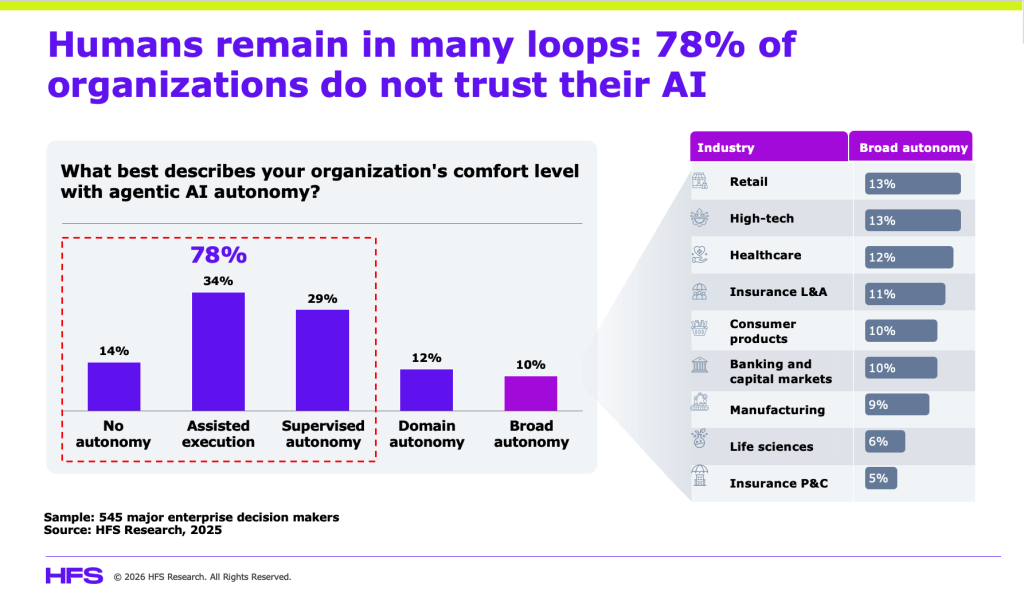

The HFS AI Trust Curve (below) maps the four stages every enterprise CIO or Chief AI Officer must traverse to get from “the model works” to “we act on what it tells us.” Understanding where you are on this curve, and what is keeping you stuck, is the most important AI question your leadership team is not asking.

The HFS AI Trust Curve: Four Stages, Most Enterprises Never Leave Stage 2

The HFS AI Trust Curve is not a maturity model in the traditional sense. It does not reward effort or intent. It rewards outcomes: an organization advances only when AI is actually allowed to influence decisions. Each stage has a defining question, a failure pattern, and a KPI that reveals where trust actually stands:

Source: HFS Research (qualitative) analysis – Data modernization and AI Horizons Study

To put things into perspective, consider a mid-sized consumer goods company that runs a $3B personal care brand with operations across 15 markets. This company’s story, laid out along the trust curve, is almost universal.

Stage 1. Model Confidence: Can the AI model work?

A $3B personal care brand operating across 15 markets builds an AI-powered demand forecasting model. It hits 87% accuracy in back-testing, outperforming the legacy statistical model by 14 percentage points. The Chief Digital Officer declares victory and the AI program is officially launched.

This is Stage 1. The KPI is model accuracy, which is necessary but not sufficient. What looks like an AI strategy is still an engineering achievement. Business stakeholders are impressed, but not yet converted, and that gap is what drives everything that follows.

Stage 2. Data Credibility: Do we believe the inputs?

Three months in, the VP of Supply Chain notices the AI’s demand signal for a core SKU diverges sharply from the regional sales team’s planning deck. The data science team traces it to a mismatch in how “sell-in” versus “sell-out” is defined across systems. The regional sales director has been using a different data set for two years and considers his version the gold standard. Now there are two dashboards, two answers, and a model that is technically correct but organizationally contested. AI has inherited a problem humans created.

The Stage 2 KPI is reconciliation effort: the time spent resolving competing definitions and ownership disputes. For this consumer goods company, the data fight is a symptom of a governance failure that requires a conversation between the CFO, Chief Supply Chain Officer, and CDO. It has nothing to do with the ETL (extract, transform, load) pipeline. Enterprises that treat Stage 2 as an engineering problem are putting a ceiling on everything AI could achieve.

Stage 3. Behavioral Trust: Will people actually act on it?

The personal care brand resolves most of the data disputes, or at least calls a truce. The model is redeployed. Regional planners are trained. And then, in the next planning cycle, something quietly damning happens. The planners pull the AI recommendation, note it, and then proceed to build their own bottom-up forecast in Excel, adjusting for “local market intuition” and “factors the model doesn’t understand.” The AI output is printed in the deck as Appendix B, but nobody references it in the meeting.

This is Stage 3. The danger zone. When AI becomes advisory only, trust has not crossed the curve. It has essentially stalled at the edges.

The override rate, i.e., the percentage of AI recommendations that are modified or ignored in final decisions, shoots up to 75%. Senior leadership interprets this as a change management problem, which it is most definitely not. It is a symptom of unresolved credibility gaps from Stage 2 and of a deeper structural reality: the planners are not rewarded for trusting the model. They are rewarded for hitting their numbers. If the model is wrong and they follow it, the accountability falls on them. That incentive structure essentially turns rational humans into override engines.
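As a rough illustration of how the override rate could be instrumented, the sketch below counts a decision as an override when the AI output was ignored outright or when the final number deviates from the recommendation by more than a tolerance. The `Decision` fields, the SKU names, and the 2% tolerance are all assumptions made for this example; the article does not prescribe a formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One planning decision. All field names are hypothetical."""
    sku: str
    ai_recommendation: float    # units the model recommended
    final_decision: float       # units the planner actually committed
    ai_referenced: bool = True  # False if the recommendation was ignored outright


def is_override(d: Decision, tolerance: float = 0.02) -> bool:
    """An override is an ignored recommendation, or a final number that
    differs from the recommendation by more than `tolerance` (relative)."""
    if not d.ai_referenced:
        return True
    if d.ai_recommendation == 0:
        return d.final_decision != 0
    gap = abs(d.final_decision - d.ai_recommendation) / abs(d.ai_recommendation)
    return gap > tolerance


def override_rate(decisions: list[Decision], tolerance: float = 0.02) -> float:
    """Share of decisions in which the AI recommendation was modified or ignored."""
    if not decisions:
        raise ValueError("no decisions to measure")
    overridden = sum(is_override(d, tolerance) for d in decisions)
    return overridden / len(decisions)


# Illustrative data only, not figures from the study.
sample = [
    Decision("SKU-A", 1000, 1000),                      # accepted as-is
    Decision("SKU-B", 1000, 1300),                      # modified
    Decision("SKU-C", 500, 450),                        # modified
    Decision("SKU-D", 800, 800, ai_referenced=False),   # ignored
]
print(f"Override rate: {override_rate(sample):.0%}")  # Override rate: 75%
```

The tolerance matters: set it too tight and rounding counts as distrust, too loose and real overrides disappear from the number.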

Stage 4. Decision Reliance: Is AI allowed to influence outcomes?

Stage 4 looks different. In this scenario, the consumer goods brand’s new Chief Supply Chain Officer makes a conscious structural change. AI-generated demand signals become the baseline for all planning conversations. Planners must log overrides with documented rationale. Performance reviews begin to include a metric on how well AI recommendations correlate with actual outcomes, and on whether human adjustments added value (or subtracted it). Within two quarters, override rates drop to 30%.
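One way to check whether human adjustments added or subtracted value is to compare the forecast error of the AI baseline with the error of the planner's adjusted number once actual demand is known. The sketch below assumes a hypothetical `OverrideRecord` log and uses absolute error in units as the yardstick; the record fields, the sample figures, and the choice of error metric are illustrative assumptions, not the method used by the company in the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OverrideRecord:
    """A logged override. Field names are hypothetical."""
    sku: str
    ai_forecast: float       # the AI baseline
    planner_forecast: float  # the number after human adjustment
    actual: float            # realized demand
    rationale: str           # documented reason for the override

    def __post_init__(self) -> None:
        # Enforce the "documented rationale" rule at logging time.
        if not self.rationale.strip():
            raise ValueError("an override must carry a documented rationale")


def adjustment_value(r: OverrideRecord) -> float:
    """Units of forecast error removed by the human adjustment.
    Positive: the planner landed closer to actual demand than the AI did.
    Negative: the adjustment made the forecast worse."""
    return abs(r.ai_forecast - r.actual) - abs(r.planner_forecast - r.actual)


def summarize(records: list[OverrideRecord]) -> dict:
    values = [adjustment_value(r) for r in records]
    return {
        "helped": sum(1 for v in values if v > 0),
        "hurt": sum(1 for v in values if v < 0),
        "net_units": sum(values),
    }


# Illustrative data only.
log = [
    OverrideRecord("SKU-A", 1000, 1200, 1150, "regional promotion not in model inputs"),
    OverrideRecord("SKU-B", 800, 600, 790, "local market intuition"),
    OverrideRecord("SKU-C", 500, 520, 480, "distributor feedback"),
]
print(summarize(log))
```

In this made-up log, one override helped and two hurt, for a net loss of 100 units of accuracy. A review metric built this way judges the adjustment, not the person's willingness to follow the model.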

The KPI here is time-to-trust, i.e., how quickly does an AI-generated insight translate into an actual decision? In Stage 4 enterprises, this number is tracked. In Stage 3, it is not even a concept yet.
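Tracking time-to-trust requires little more than two timestamps per insight: when it was generated and when a decision based on it was committed. The sketch below is one possible reading of the KPI, assuming the median lag in hours over insights that were acted on, reported alongside the share that never led to a decision. The function name, the event format, and the use of the median are assumptions for illustration.

```python
from datetime import datetime
from statistics import median
from typing import Optional

# (insight generated at, decision committed at or None if never acted on)
Event = tuple[datetime, Optional[datetime]]


def time_to_trust(events: list[Event]) -> tuple[Optional[float], float]:
    """Return (median hours from insight to decision, share never acted on)."""
    if not events:
        raise ValueError("no events to measure")
    lags_hours = [
        (decided - generated).total_seconds() / 3600
        for generated, decided in events
        if decided is not None
    ]
    unused_share = 1 - len(lags_hours) / len(events)
    median_lag = median(lags_hours) if lags_hours else None
    return median_lag, unused_share


# Illustrative data only.
events = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 3, 9)),    # 48 hours
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 8, 15)),   # 6 hours
    (datetime(2024, 1, 15, 9), datetime(2024, 1, 16, 9)),  # 24 hours
    (datetime(2024, 1, 22, 9), None),                      # never acted on
]
lag, unused = time_to_trust(events)
print(f"Median time-to-trust: {lag} hours; never acted on: {unused:.0%}")
```

Reporting the unused share next to the median keeps ignored insights from quietly dropping out of the metric.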

The hallmark of Stage 4 maturity is not that AI is always right. It is that the organization has accepted that AI creates value only when it is allowed to be wrong before it is right. This stage requires institutional courage that most enterprises have yet to find. The reality is that enterprise accountability structures still punish the person who trusted a model that missed, while quietly ignoring the person who ignored a model that was right.

The four KPIs across the four stages are your trust matrix

The four trust-curve KPIs, i.e., model accuracy, reconciliation effort, override rate, and time-to-trust, do not tell you how good your AI is. They tell you where trust is actually breaking down. Presented together, they form an honest picture of whether your enterprise is genuinely adopting AI to realize its full potential.

Most AI program dashboards obsessively report the first KPI and ignore the other three, creating a blind spot. Reconciliation effort and override rate are KPIs enterprises actively avoid measuring, because what they reveal is an uncomfortable truth about human shortcomings, including contested data ownership, unresolved governance failures, and business users who have quietly concluded the AI is not worth the risk of being wrong alongside it. In the consumer goods example, a single override rate measurement revealed a governance failure that two years of AI investment had papered over.

The plateau persists because of culture debt

Enterprises stall between Stages 2 and 3 not because the models are weak, but because the organization was designed for human-controlled decisioning. The capabilities that get you through Stage 1, experimentation and validation, are not the capabilities that move you into scaled, AI-driven execution. Technical teams can tune models. They cannot renegotiate data ownership with Finance. They cannot redesign incentives so planners trust machine-generated forecasts. They cannot build the institutional confidence required for leaders to stand behind an AI-informed decision that later proves imperfect.

The firms breaking through the curve are not doing so because they have superior algorithms. They are doing so because leadership has resolved the human questions: Who owns the data? Who owns the insight? Who owns the outcome? Until those answers are explicit, AI remains advisory theater.

The Bottom Line: Every day your AI sits in recommendation mode is a day your competitor is operationalizing theirs. That gap is culture debt, and it compounds faster than technical debt because it hides behind governance language and “risk management.”

Instrument your AI deployments. Measure override rates. Track how often outputs are second-guessed or manually reconciled. Surface where decision rights are being pulled back to humans by default. Then follow those signals upstream to the incentive misalignments and trust deficits they reveal.

Stage 4 is not unlocked by better prompts or bigger models. It is unlocked by organizational honesty. This is not a technology bottleneck; it is a leadership one.
